If a 3-cycle branch instruction (i.e. a taken branch not crossing a page boundary) ends in the cycle before the 6502 would normally start the 7-cycle interrupt execution, the 6502 executes another full instruction and starts with interrupt handling after that. I tested this both with IRQs and NMIs, and the results were identical.

With all other instructions I tested so far, including 2-cycle branches (i.e. branch not taken) and 4-cycle branches (i.e. branch taken crossing a page boundary), the behaviour is as expected: the instruction finishes and the CPU starts interrupt handling immediately in the next cycle.

The Atari XL and XE series use an extended NMOS 6502 CPU, but AFAIK the only changes to the original NMOS 6502 were the addition of some halt logic and bus-isolation circuits.

AtariAge user bob1200xl also verified this on an Atari 800, which uses a standard 6502 and has the bus isolation built with discrete logic. So I assume that other NMOS 6502s might also be affected by this "bug". A test on a 65816 didn't show this behaviour, so I guess the CMOS variants could have been fixed.

My question now is: has anyone experienced such behaviour before and maybe knows what's going on inside the NMOS 6502?

We are currently discussing this issue over on the AtariAge forum in this thread but are kind of clueless. We suspected that something strange might be going on at the transition from T2 of the branch instruction to T0 that blocks interrupt execution in T0. But this is just a wild guess.

Now for some more background information:

I wrote a simple test program for my Atari which basically just aligns some code so that the last cycle of an instruction executes just before the interrupt fires. This code is followed by some dummy instructions (I used "LDA #1", "LDA #2", "LDA #3"). The interrupt handler then just reads the return address from the stack and stores it, and by looking at the code at this address (either pointing to "LDA #1" or "LDA #2") I know where the interrupt occurred.
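The decoding step described above can be sketched on the host side as follows. This is only an illustration: the function name, the result strings and the address `0x0600` are hypothetical and do not come from the test program. It relies on the fact that the 6502 pushes the address of the next instruction to execute when it takes an interrupt, and that "LDA #n" is two bytes long.

```python
LDA_IMM_SIZE = 2  # "LDA #n" occupies two bytes: opcode $A9 plus the operand


def classify_return_address(retadr, lda1_addr):
    """Tell where the interrupt was taken from the stored return address.

    retadr    -- the 16-bit value the handler stored in RETADR
    lda1_addr -- address of the "LDA #1" dummy instruction (hypothetical input)
    """
    if retadr == lda1_addr:
        return "before LDA #1 (expected)"
    if retadr == lda1_addr + LDA_IMM_SIZE:
        return "before LDA #2 (one instruction late)"
    if retadr == lda1_addr + 2 * LDA_IMM_SIZE:
        return "before LDA #3 (two instructions late)"
    return "outside the dummy instructions"


# Example with a made-up code address of $0600 for "LDA #1".
print(classify_return_address(0x0602, 0x0600))
```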

The test code looks like this (interrupt setup and most of the code alignment have been done before):

            SEC
            LDX ZPTEMP
            LDX ZPTEMP
            BCS CBCST1
    CBCST1
    ; <-- interrupt should occur here
            LDA #1
    ; <-- but actually is executed here
            LDA #2
            LDA #3

And now the interrupt handler:

    ; IRQ code: store the return address
    MYIRQ   PHA
            TXA
            PHA
            TSX
            LDA $0104,X
            STA RETADR
            LDA $0105,X
            STA RETADR+1
            LDA #0
            STA IRQEN
            PLA
            TAX
            PLA
            RTI

First version, using the vertical blank NMI: http://www.horus.com/~hias/tmp/vbitest-1.0.zip

Second version, testing with both the vertical blank NMI and the Pokey timer IRQ, and also including a test for a 4-cycle branch crossing a page boundary:

http://www.horus.com/~hias/tmp/inttest-1.0.zip
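For readers wondering why the handler reads `$0104,X` and `$0105,X`: the small Python sketch below replays the pushes that happen before the TSX and then performs the same two indexed reads. It is an illustration only. The function name and the example register values are made up, and memory is modelled as a plain dictionary.

```python
def read_return_address(sp, pc, status, a, x):
    """Replay the pushes up to TSX and read the return address like MYIRQ does."""
    page1 = {}  # sparse model of memory: address -> byte

    def push(value):
        nonlocal sp
        page1[0x0100 + sp] = value & 0xFF
        sp = (sp - 1) & 0xFF

    # Pushed by the CPU during the interrupt sequence: PCH, PCL, then P.
    push(pc >> 8)
    push(pc)
    push(status)
    # Pushed by the handler prologue: PHA, then TXA / PHA.
    push(a)
    push(x)

    x = sp  # TSX: X now points one below the last pushed byte
    lo = page1[0x0104 + x]  # LDA $0104,X -> PCL
    hi = page1[0x0105 + x]  # LDA $0105,X -> PCH
    return (hi << 8) | lo


# Made-up example: stack pointer $FF, interrupted just before address $0602.
print(hex(read_return_address(0xFF, 0x0602, 0x30, 0x11, 0x22)))
```

After the five pushes, `$0101,X` holds the saved X, `$0102,X` the saved A and `$0103,X` the status byte, so the return address sits at offsets 4 and 5.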

Another note: on the Atari the Pokey IRQ timing is quite tight. Pokey asserts the IRQ quite late during phase 2, so on some computers the IRQ setup time for this cycle is not met and everything is delayed by 1 cycle (so the 6502 executes the next instruction in all cases, as it only "saw" the interrupt being asserted for one cycle). On the Ataris the NMI timing is a lot more reliable, as the Antic asserts NMI early during phase 1. You might need to take care of similar effects when you try to reproduce this on other systems.

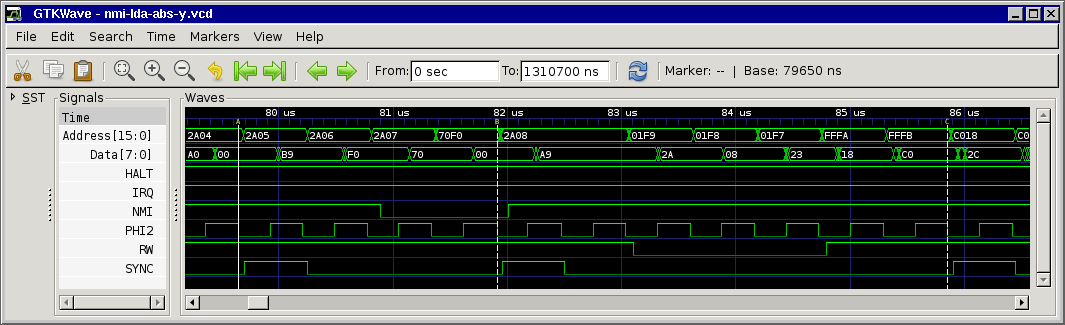

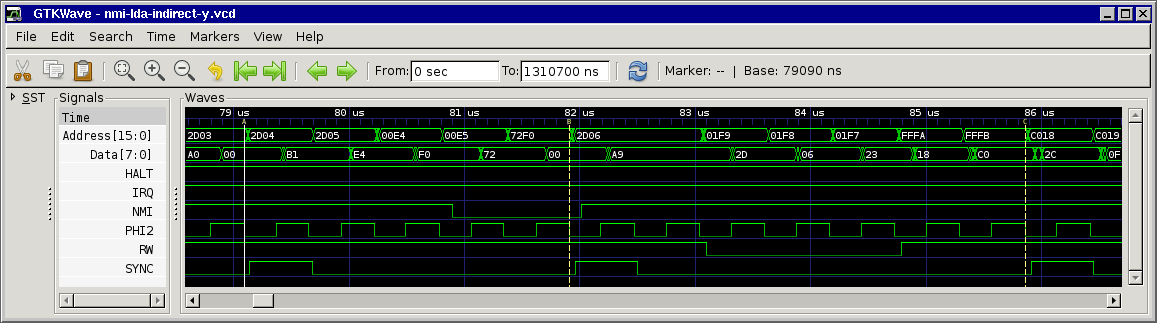

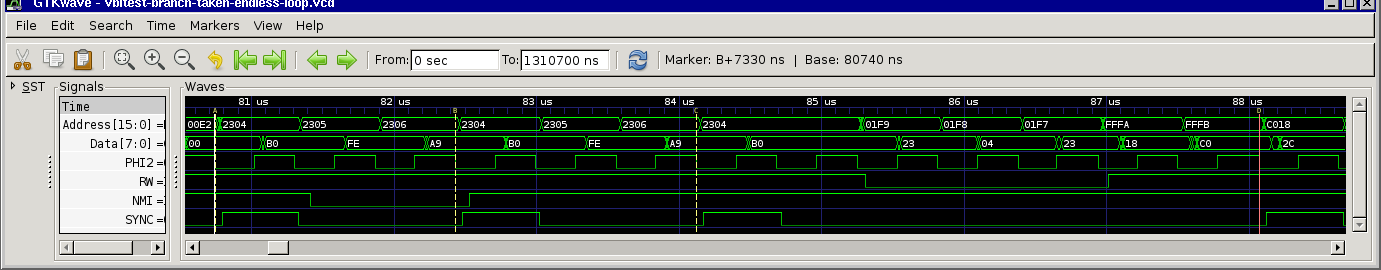

I hooked up my logic analyzer to check what was going on. First a screenshot of the expected behaviour (using a 2-cycle not-taken branch):

At ~81us the CPU fetches the BCS opcode and NMI goes low. At the end of this cycle the CPU recorded the first-low-cycle of NMI. In the next cycle the CPU fetches the offset, NMI is still low and the CPU records the second-low-cycle so interrupt execution can start. Then, in the next cycle, the 7-cycle interrupt execution starts.

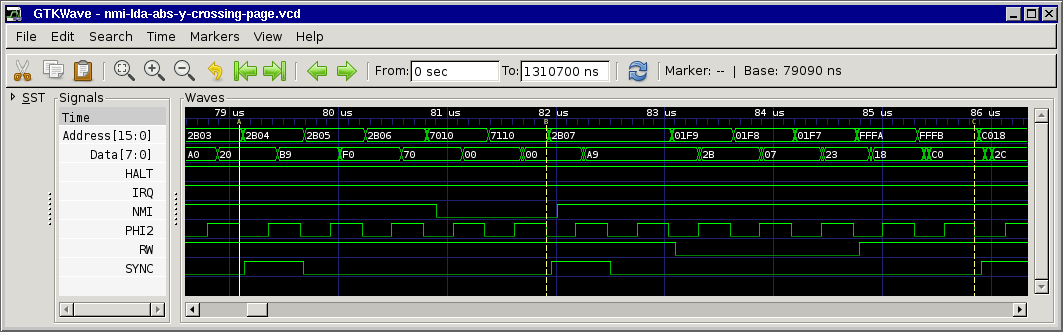

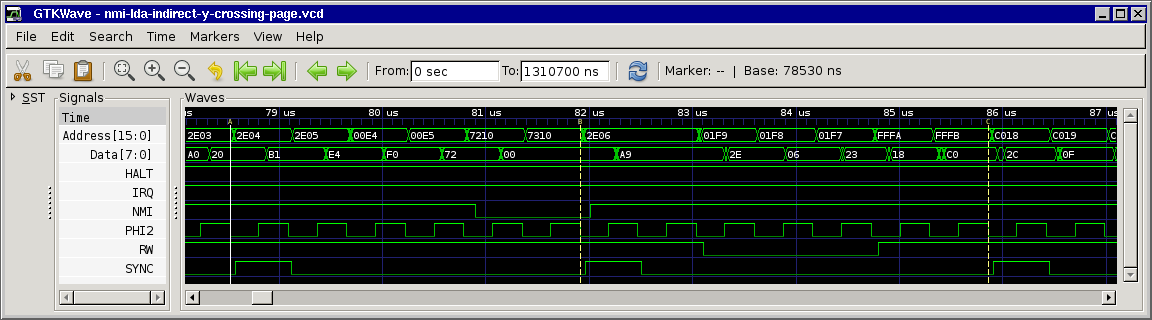

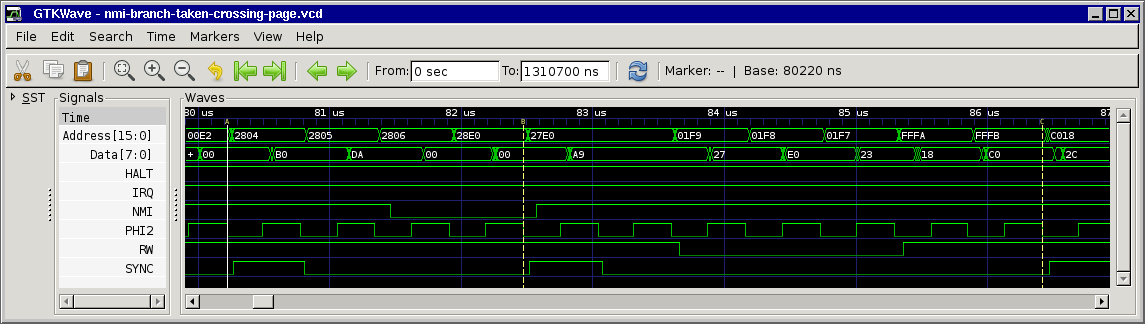

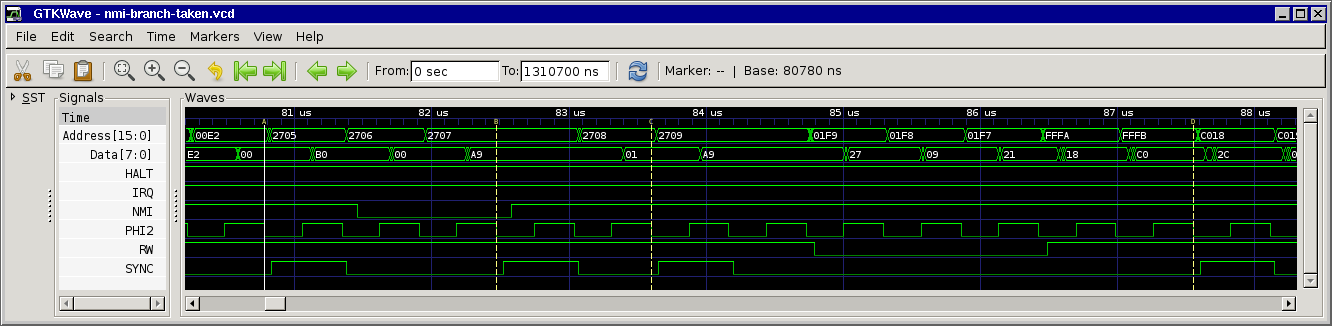

Now the screenshot of the 3-cycle branch leading to the execution of another instruction:

At ~81us the CPU fetches the BCS opcode and in the next cycle the offset; NMI also goes low during that cycle. Then the CPU executes the branch, and now the NMI should be serviced. But the CPU fetches the next opcode ("A9" / "LDA #") and the data ("01"), and only after that starts the 7-cycle interrupt sequence.

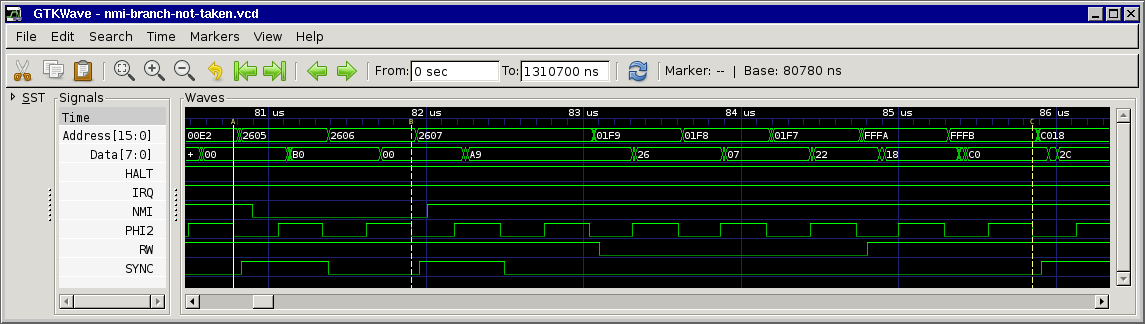

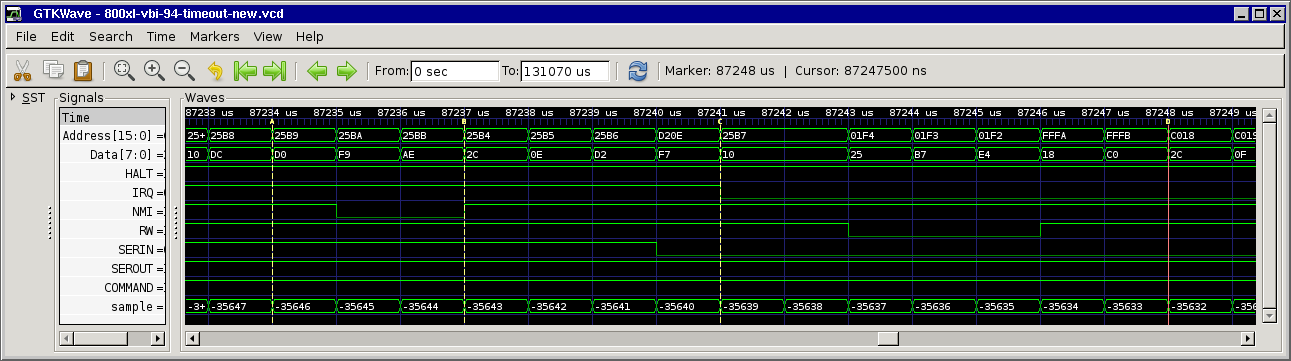
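To make the difference between the two traces described above easier to reason about, here is a small Python sketch of one hypothetical timing rule that happens to reproduce both of them. It is not taken from any datasheet and is not a claim about what the silicon does. It assumes that the CPU decides whether to take the interrupt during the second-to-last cycle of each instruction, and that a 3-cycle taken branch makes that decision one cycle earlier (during its first cycle). Both rules and all names are assumptions chosen only to fit the observations.

```python
def interrupt_entry(instructions, nmi_low_from, branch_quirk=True):
    """Return (name of last instruction executed, first cycle of the interrupt sequence).

    instructions -- list of (name, cycles, is_branch) tuples, executed in order
    nmi_low_from -- first cycle (counted from 1) in which NMI is seen low
    branch_quirk -- apply the assumed special case for 3-cycle branches
    """
    start = 1
    for name, cycles, is_branch in instructions:
        # Assumed rule: the decision is made in the second-to-last cycle.
        decision_cycle = start + cycles - 2
        if branch_quirk and is_branch and cycles == 3:
            # Assumed special case: decided during the branch's first cycle.
            decision_cycle = start
        end = start + cycles - 1
        if nmi_low_from <= decision_cycle:
            return name, end + 1
        start = end + 1
    return None


# Trace 1: branch not taken (2 cycles), NMI low from the opcode fetch.
print(interrupt_entry([("BCS", 2, True), ("LDA #1", 2, False)], 1))
# Trace 2: branch taken (3 cycles), NMI low from the offset fetch.
print(interrupt_entry([("BCS", 3, True), ("LDA #1", 2, False)], 2))
```

With `branch_quirk=False` the second call reports the interrupt right after the BCS, which is the behaviour I expected but did not observe.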

Here's another screenshot from my real-world code (sampled at Atari clock speed at the falling edge of PHI2 - ignore the timestamps at the top, they are a dummy 1us per sample). First there's a BNE backwards, followed by a "BIT $D20E" executed instead of the NMI handler:

I did some research, but the only thing I found was a small detail in the MOS hardware manual: on page A-13, in the description of the branch instruction, the (dummy) opcode fetches in states T2 and T3 didn't have the word "discarded" with them (as the other dummy reads have), and the T0 cycle afterwards wasn't mentioned. But these could also be simple typos and don't have to mean anything.

I would be quite interested if someone could tell more about this issue, and (just out of curiosity) if other 6502 variants like the 6510 are affected by this, too.

so long,

Hias