What do you think: if the system had a cache memory, what impact would it have on performance?

And which scheme of operation would be best?

6502 and CACHE memory. Is it possible?

Re: 6502 and CACHE memory. Is it possible?

A good old-fashioned 6502 system can't really benefit from cache, because memory accesses are already single-cycle. (Unless you have a slow ROM with wait-states...)

But, when the clock of the CPU exceeds the access time of the memory, yes, I think there are possibilities for benefit. Even the 14MHz parts we buy are not really too fast for memory - which is to say, we can buy adequately fast memories. So, it's the realm of the FPGA implementation where caches could be interesting: the CPUs can run at 50MHz up to 100MHz or so, and it's difficult to build a memory system quite as fast as that. But there's more: there's scope for putting some accesses in parallel, such as the two bytes read and written for JSR and RTS, or the two bytes read for indirect accesses, or indeed the two or three operand bytes of each opcode. And indeed, there's scope to run the instruction fetching in parallel with the write-back of stores, to improve the pipelining.

As far as I know, no-one has tackled these ideas yet in practice.
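To put rough numbers on the clock-versus-memory argument, here is a minimal sketch in Python using the usual average-access-time estimate. The 55 ns memory access time, the 90% hit rate, and the function name `cycles_per_access` are assumptions for illustration, not measurements from any real system.

```python
import math

def cycles_per_access(core_mhz, mem_ns, hit_rate, hit_cycles=1):
    """Return (cycles per access without cache, average cycles with cache).

    Assumed model: a hit costs hit_cycles; a miss costs the cache lookup
    plus a full main-memory access.
    """
    cycle_ns = 1000.0 / core_mhz
    # Whole core cycles needed for one main-memory access (at least one).
    mem_cycles = max(1, math.ceil(mem_ns / cycle_ns))
    with_cache = (hit_rate * hit_cycles
                  + (1.0 - hit_rate) * (hit_cycles + mem_cycles))
    return mem_cycles, with_cache

# Hypothetical figures: 55 ns memory, 90% hit rate.
slow_core = cycles_per_access(14, 55, 0.9)    # memory already keeps up
fast_core = cycles_per_access(100, 55, 0.9)   # FPGA-class core clock
```

With these assumed figures, the 14MHz core already gets single-cycle memory, so a cache cannot help and the lookup overhead on misses makes it slightly worse. At 100MHz the same memory costs six cycles per access, and a 90% hit rate brings the average down to about 1.6.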

There's another case: Shane Gough's emulated 6502 on ARM has very slow access to main memory over an SPI connection. There's scope there for some local memory or caching. See

viewtopic.php?f=1&t=3146

- White Flame

Re: 6502 and CACHE memory. Is it possible?

One could consider zero page to play a role vaguely similar to that of cache in other, larger architectures. While regular cache dynamically places data into faster-access memory based on recent usage, 6502 programming usually statically allocates data into faster-access memory.

But the biggest difference is that our "slow" vs "fast" memory access is only 1 cycle each. As BigEd mentions, the situation would need to change for cache to really be appropriate, and the way it changes to get into that realm dictates what role cache would play.
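The contrast between static allocation and dynamic caching can be made concrete with a toy model. The sketch below is a minimal direct-mapped cache in Python, assuming 64 lines of 4 bytes over a 64KB address space; the class name and sizes are hypothetical and not taken from any real 6502 design. Unlike zero page, nothing is placed by the programmer: lines are filled purely by which addresses were touched recently.

```python
class DirectMappedCache:
    """Toy direct-mapped cache over a 64 KB address space (sizes assumed)."""

    def __init__(self, num_lines=64, line_size=4):
        self.num_lines = num_lines
        self.line_size = line_size
        self.tags = [None] * num_lines  # one tag per line; None means empty
        self.hits = 0
        self.misses = 0

    def access(self, addr):
        """Record one access; return True on a hit, False on a miss."""
        block = (addr & 0xFFFF) // self.line_size
        index = block % self.num_lines   # which line the block maps to
        tag = block // self.num_lines    # identifies the block in that line
        if self.tags[index] == tag:
            self.hits += 1
            return True
        self.tags[index] = tag           # fill (or replace) the line on a miss
        self.misses += 1
        return False


# A loop that reads the same 16 bytes ten times warms up after one pass.
cache = DirectMappedCache()
for _ in range(10):
    for addr in range(0x0200, 0x0210):
        cache.access(addr)
```

In this run only the first touch of each 4-byte line misses. Two addresses exactly 256 bytes apart would instead evict each other on every access, which is the kind of behaviour a statically allocated zero page never has.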

- barrym95838

Re: 6502 and CACHE memory. Is it possible?

I am by no stretch of the imagination a hardware expert, but it seems to me that caching page zero and page one could allow a 6502-like cpu to be modified to shorten the external cycle counts for many instructions, due to the elimination of some external reads and writes in favor of potentially much-faster internal ones.

AFAIK, it would be a trivial matter to cache all 64KB (or more) on-chip, and run off an internal clock faster than any external SRAM could handle, only slowing down for memory-mapped I/O as needed. Please forgive me if I'm either stating the obvious or severely mistaken ... my last hardware project was over a quarter of a century ago!

Mike B.
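As a rough illustration of the "all 64KB on-chip, slow down only for I/O" idea, the sketch below counts core cycles for a list of accessed addresses. The I/O window at `0xD000`-`0xDFFF`, the eight wait cycles, and the function name are all assumptions chosen for the example, not properties of any particular machine.

```python
IO_START, IO_END = 0xD000, 0xDFFF  # hypothetical memory-mapped I/O window

def total_core_cycles(addresses, io_wait_cycles=8):
    """Sum fast-clock cycles for a sequence of accessed addresses.

    Assumed model: on-chip RAM answers in one fast cycle; an access inside
    the I/O window is stretched by io_wait_cycles to suit the slow bus.
    """
    cycles = 0
    for addr in addresses:
        if IO_START <= addr <= IO_END:
            cycles += 1 + io_wait_cycles  # stretched external access
        else:
            cycles += 1                   # internal access at full speed
    return cycles

# Two RAM accesses and one I/O access: 1 + 1 + 9 fast cycles.
example = total_core_cycles([0x0000, 0x0100, 0xD012])
```

Under this model the cost of the slow bus is paid only in proportion to how often the program actually touches I/O.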

Re: 6502 and CACHE memory. Is it possible?

That's right - the key is that you're talking about a new variant of 6502, presumably on an FPGA. And that would be a fine project - but still there's not much that can be done with an actual 6502.

Re: 6502 and CACHE memory. Is it possible?

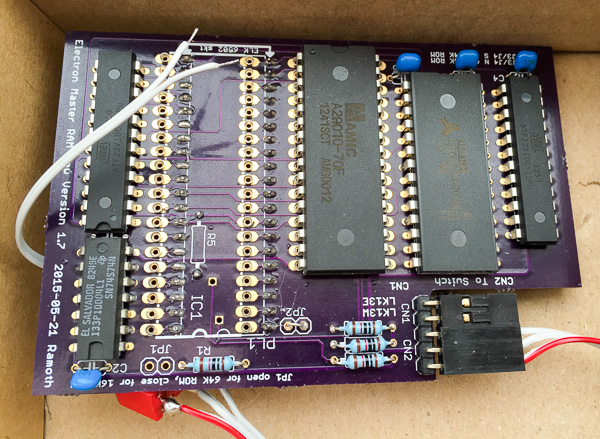

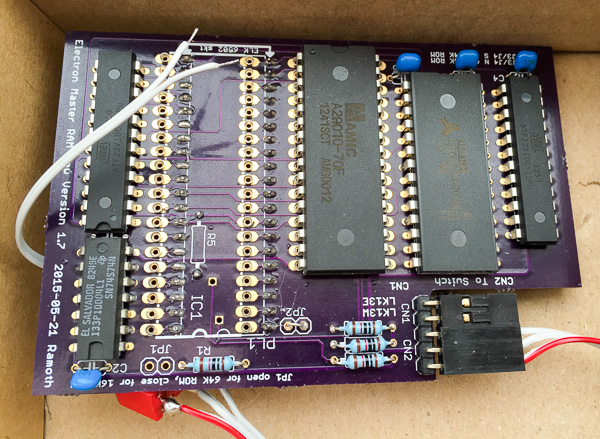

Ah, just thought of another case: Acorn's Electron has nibble-wide RAM which takes two ticks to access, and also has to provide video data. So the CPU is often running below speed (although it can run at full speed from ROM, or when only low resolution graphics are displayed.) There is an accelerator board (or two?) which replaces the CPU and provides some local memory which can run at full speed. (Edit: See http://indosingo7.com/_lain.php?_lain=5 ... urbo_Board)

In theory, a large multi-board memory might need wait states, but I'm not sure if that happened historically, given the increasing size of RAM chips and the limited amount of installed RAM in most 6502 systems. It must have happened in one or two cases. The Micromind from ECD is a possible example:

https://plus.google.com/u/0/10898429046 ... 5qPc1Ugdri
