
Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 12:52 pm

by hoglet

Hi Arlet,

I've hit possibly the most subtle issue yet; it depends on the combination of:

- RDY being used (this is the most important pre-condition)

- Read-Modify-Write to ZP (e.g. ASL, ROL)

- Decimal Mode (for ASL anyway)

The bug results in the N flag being inconsistent with the result.

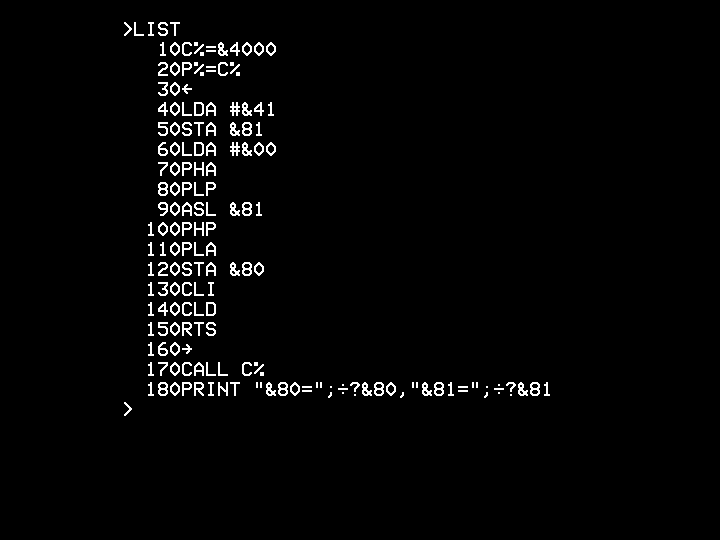

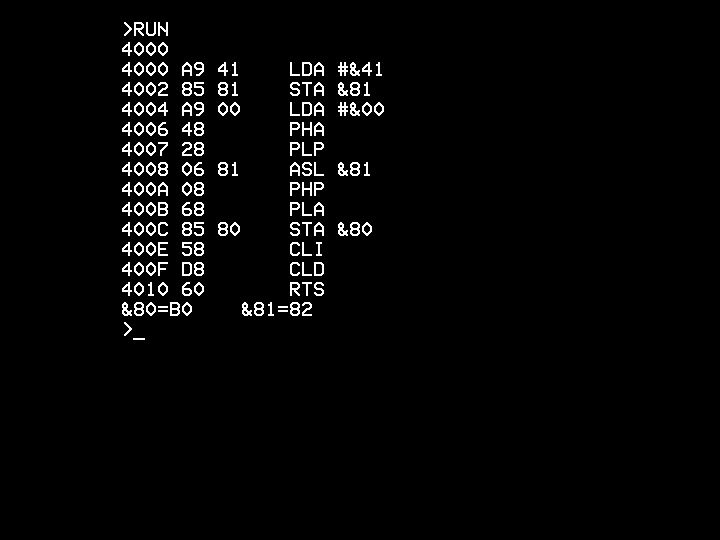

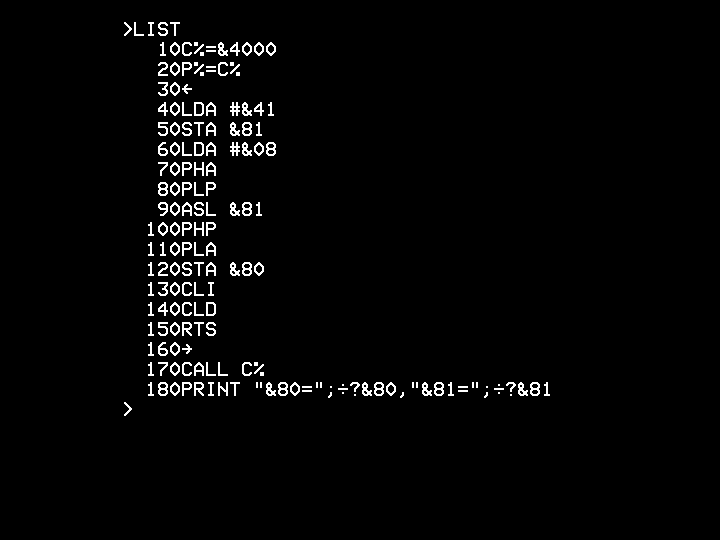

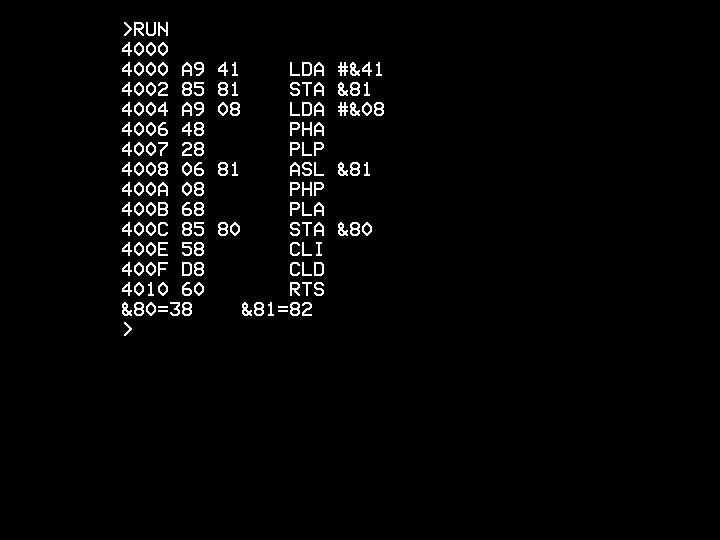

In the following tests, location &80 holds the flags after the ASL, and bit 7 (the N flag) should always match bit 7 of the result (in &81).
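For reference, here is a small Python model of what ASL and ROL should produce, plus the N-flag check described above. The helper names are hypothetical and not taken from the core. It assumes the byte saved at &80 is a status byte with N in bit 7, as on any 6502.

```python
# Hypothetical reference model: expected result and N/Z/C flags for ASL and
# ROL on an 8-bit value (0..255), plus the N-vs-bit-7 check used in the test.

def asl(value: int) -> tuple[int, dict]:
    result = (value << 1) & 0xFF
    return result, {"N": result >> 7, "Z": int(result == 0), "C": value >> 7}

def rol(value: int, carry_in: int) -> tuple[int, dict]:
    result = ((value << 1) | carry_in) & 0xFF
    return result, {"N": result >> 7, "Z": int(result == 0), "C": value >> 7}

def n_flag_consistent(status: int, result: int) -> bool:
    """True if N (bit 7 of the saved status byte) matches bit 7 of the result."""
    return (status >> 7) & 1 == (result >> 7) & 1

if __name__ == "__main__":
    print(asl(0x41))                      # a result of &82 must come with N=1
    print(rol(0x41, 0))
    print(n_flag_consistent(0x30, 0x82))  # N clear but result negative
```

Any saved status byte with bit 7 clear next to a result of &82 fails the check, which is exactly the symptom in the captures.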

First, with decimal mode off:

- capture11.png (2.29 KiB)

- capture12.png (2.45 KiB)

Then, with decimal mode on:

- capture9.png (2.3 KiB)

- capture10.png (2.46 KiB)

I think I'm correctly following your suggested pattern for using RDY with synchronous memory. I also checked your implementation of RDY, and this looks solid to me. Every use of posedge clk is qualified by RDY, and this is the only use of RDY.
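A toy illustration of why qualifying every clock edge with RDY should make stalls invisible: below, a PC-like counter advances either only on RDY-high cycles (the pattern described above) or on every cycle. The model and its names are hypothetical. It only shows the principle, not the core's actual logic.

```python
# Toy model: a PC-like counter whose clock edge is either qualified by RDY
# (like `always @(posedge clk) if (RDY) pc <= pc + 1;`) or not.
# Returns the value the CPU uses on each RDY-high cycle.

def trace(rdy_pattern, qualified=True):
    pc = 0
    used = []
    for rdy in rdy_pattern:
        if rdy:
            used.append(pc)            # the cycle completes with this value
        if rdy or not qualified:
            pc = (pc + 1) & 0xFFFF     # clock edge at the end of the cycle
    return used

if __name__ == "__main__":
    print(trace([1, 1, 1]))                         # no stalls
    print(trace([1, 0, 0, 1, 1]))                   # stalls, qualified edge
    print(trace([1, 0, 0, 1, 1], qualified=False))  # stalls, unqualified edge
```

With the qualified version the stalled run sees the same sequence as the unstalled one. A single register that misses the qualification breaks that equivalence.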

This is with the generic version of your core (I haven't tried the spartan6 version for this failure).

Looking at the microcode, there does seem to be an unexpected (to me) difference between &14C and &1CC

```
@14C 000_00001_01_000_0111_10011_00_00101100 // ASL, NZC -> @12C
@1CC 100_00001_01_000_0111_10011_00_00011111 // ASL, NZC -> @11F
```

If I change the test code to use ROL, then I see failures in both cases (i.e. N=0 in both cases, even though the result is &82).

The microcode for ROL is:

```
@152 100_00001_01_000_0111_10011_10_00011111 // ROL, NZC -> @11F
@1D2 100_00001_01_000_0111_10011_10_00011111 // ROL, NZC -> @11F
```
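A quick way to see which fields differ is to split the words on their underscores. This Python sketch does not decode what each field means. Its only assumption is that the last 8-bit field is the low byte of the next microcode address, which agrees with the `-> @12C` / `-> @11F` comments.

```python
# Microcode words quoted above, keyed by microcode address.
MICROCODE = {
    0x14C: "000_00001_01_000_0111_10011_00_00101100",  # ASL, NZC -> @12C
    0x1CC: "100_00001_01_000_0111_10011_00_00011111",  # ASL, NZC -> @11F
    0x152: "100_00001_01_000_0111_10011_10_00011111",  # ROL, NZC -> @11F
    0x1D2: "100_00001_01_000_0111_10011_10_00011111",  # ROL, NZC -> @11F
}

def fields(word):
    return word.split("_")

def differing_fields(a, b):
    """Indices of the underscore-separated fields that differ."""
    return [i for i, (x, y) in enumerate(zip(fields(a), fields(b))) if x != y]

def next_low_byte(word):
    """Assumption: the last field is the low byte of the next address."""
    return int(fields(word)[-1], 2)

if __name__ == "__main__":
    for a, b in [(0x14C, 0x1CC), (0x152, 0x1D2), (0x1CC, 0x152)]:
        diff = differing_fields(MICROCODE[a], MICROCODE[b])
        print(f"@{a:03X} vs @{b:03X}: fields {diff}")
    for addr, word in MICROCODE.items():
        print(f"@{addr:03X} -> next low byte &{next_low_byte(word):02X}")
```

The working ASL entry differs from the failing one only in the first 3-bit field and the next-address field, while both ROL entries share the failing ASL entry's first field and next address (@11F).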

Any ideas?

Should all of these routines finish at &12C/&1AC?

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 1:29 pm

by Arlet

Do you know how the RDY input is behaving?

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 1:38 pm

by Arlet

I haven't been able to reproduce it yet, but I suspect I know what it is, and it is related to the microcode differences you're seeing.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 1:57 pm

by hoglet

> Do you know how the RDY input is behaving?

That's a bit hard to determine exactly.

This is in a project called BeebAccelerator, which replaces the 6502 in the Beeb with a Xilinx XC6SLX9 FPGA (currently the same hardware as my ICE). For testing with your new core, the FPGA maps 0x0000-0x2FFF, 0x8000-0xFBFF and 0xFF00-0xFFFF to "internal" block RAM.

The core is currently running at 50MHz. If a cycle is deemed "internal", RDY is kept high and the access is served from block RAM. If a cycle is deemed external, RDY is taken low immediately and held low until the Beeb's 6502 clock is at an appropriate phase, when RDY is taken high again to allow the external cycle to complete.

With the test case, zero page and stack accesses are internal, but all code fetches are external (&4xxx is in external memory). So RDY will be low most of the time, with just occasional blips high for 1-2 cycles.

However, I still see the bug if I run the code at &2xxx, which is internal memory. In this case, I would expect RDY to be high most of the time. But that depends on what address your core puts out in dead cycles.
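The internal/external split and the RDY rule described above can be sketched as follows. The address ranges come from the post; `beeb_phase_ready` is a hypothetical stand-in for the condition that the Beeb's 6502 clock has reached the right phase.

```python
# Address map from the post: these ranges are served from FPGA block RAM,
# everything else is an external (Beeb) cycle.
INTERNAL_RANGES = [(0x0000, 0x2FFF), (0x8000, 0xFBFF), (0xFF00, 0xFFFF)]

def is_internal(addr):
    return any(lo <= addr <= hi for lo, hi in INTERNAL_RANGES)

def rdy(addr, beeb_phase_ready):
    """Simplified RDY rule: high for internal cycles; for external cycles,
    low until the (hypothetical) beeb_phase_ready condition is met."""
    return is_internal(addr) or beeb_phase_ready

if __name__ == "__main__":
    for addr in (0x0080, 0x01FF, 0x2000, 0x4000, 0xFE40):
        kind = "internal" if is_internal(addr) else "external"
        print(f"&{addr:04X}: {kind}")
```

Note that &FC00-&FEFF stays external; on the BBC Micro that range is the memory-mapped I/O area.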

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 2:02 pm

by Arlet

The only dead cycle is a double opcode fetch (from the correct address) in the PHA/PLA instructions.

I'm having trouble reproducing it, probably because of differences in the memory interface, so I will just apply the fix that I have in mind, and then you can let me know if it works.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 2:26 pm

by Arlet

Hopefully fixed in GitHub.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 2:43 pm

by hoglet

With those changes, the system doesn't boot at all.

Let me switch back to the ICE and see if I can get a bit more info on where it's now failing.

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 3:03 pm

by hoglet

It looks like it's crashed here:

```
DA20 : 26 FC : ROL $FC
DA22 : 20 EB EE : JSR $EEEB
```

Bus cycles are:

```
0 26 1 1 1
1 fc 0 1 1
2 18 0 1 1
3 30 0 0 1
DA20 : 26 FC : ROL FC : 4

0 20 1 1 1
1 20 0 1 1
2 da 0 0 1
3 23 0 0 1
4 eb 0 1 1
DA22 : 20 20 EB : JSR EB20 : 5
```

The bus cycles for the JSR look all wrong, it looks like the PC has failed to increment after the opcode, so the wrong address is called, and the wrong return address (off by one) is pushed.
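A simplified Python model reproduces this trace. A 6502 JSR reads a two-byte target and pushes the address of its last byte (the return address minus one); if PC does not advance after the opcode fetch, every operand address is one byte early. This is an illustration fitted to the bus cycles above, not a model of the core's internals.

```python
# Simplified JSR model fitted to the trace above (not the core's logic).
MEM = {0xDA20: 0x26, 0xDA21: 0xFC,                # ROL $FC
       0xDA22: 0x20, 0xDA23: 0xEB, 0xDA24: 0xEE}  # JSR $EEEB

def jsr(mem, pc, pc_increments=True):
    """Return (target, address pushed on the stack) for a JSR at pc."""
    operand = pc + 1 if pc_increments else pc  # address of the target low byte
    target = mem[operand] | (mem[operand + 1] << 8)
    pushed = operand + 1                       # address of the target high byte
    return target, pushed

if __name__ == "__main__":
    for ok in (True, False):
        target, pushed = jsr(MEM, 0xDA22, ok)
        print(f"pc_increments={ok}: JSR {target:04X}, pushes {pushed:04X}")
```

The faulty case gives JSR EB20 and pushes &DA23, which matches what the ICE shows.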

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 3:11 pm

by Arlet

Ok, I forgot to update address mode bits when copy/pasting lines in microcode. Fixed.

I guess Klaus's test suite doesn't do a JSR after ROL.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 3:45 pm

by hoglet

Thanks Arlet, that now boots again and seems to have sorted the original RDY problem.

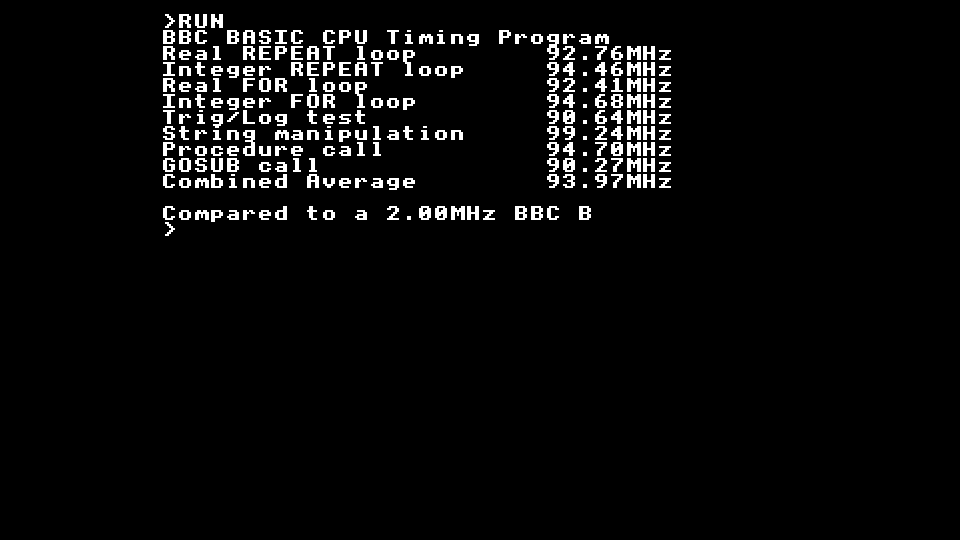

Here's it running with an 80MHz clock:

- capture14.png (1.83 KiB)

The generic version doesn't quite meet timing at 80MHz:

```
Paths for end point Mram_ram1 (RAMB16_X0Y8.ADDRB7), 2365 paths
--------------------------------------------------------------------------------
Slack (setup path):     -0.043ns (requirement - (data path - clock path skew + uncertainty))
  Source:               Mram_ram1 (RAM)
  Destination:          Mram_ram1 (RAM)
  Requirement:          12.500ns
  Data Path Delay:      12.283ns (Levels of Logic = 5)
  Clock Path Skew:      0.000ns
  Source Clock:         cpu_clk_BUFG rising at 0.000ns
  Destination Clock:    cpu_clk_BUFG rising at 12.500ns
  Clock Uncertainty:    0.260ns

  Clock Uncertainty:            0.260ns  ((TSJ^2 + TIJ^2)^1/2 + DJ) / 2 + PE
    Total System Jitter (TSJ):  0.070ns
    Total Input Jitter (TIJ):   0.000ns
    Discrete Jitter (DJ):       0.450ns
    Phase Error (PE):           0.000ns

  Maximum Data Path at Slow Process Corner: Mram_ram1 to Mram_ram1
    Location              Delay type        Delay(ns) Physical Resource
                                                      Logical Resource(s)
    ------------------------------------------------- -------------------
    RAMB16_X0Y8.DOB0      Trcko_DOB         2.100     Mram_ram1
                                                      Mram_ram1
    SLICE_X12Y38.D6       net (fanout=8)    2.733     ram_dout<0>
    SLICE_X12Y38.CMUX     Topdc             0.456     cpu/abl/AHL<7>
                                                      Mmux_cpu_DI11_SW2_F
                                                      Mmux_cpu_DI11_SW2
    SLICE_X12Y38.A2       net (fanout=1)    0.741     N144
    SLICE_X12Y38.A        Tilo              0.254     cpu/abl/AHL<7>
                                                      cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_lut<0>
    SLICE_X16Y41.A4       net (fanout=1)    0.762     cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_lut<0>
    SLICE_X16Y41.COUT     Topcya            0.474     cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_cy<3>
                                                      cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_lut<0>_rt
                                                      cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_cy<3>
    SLICE_X16Y42.CIN      net (fanout=1)    0.003     cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_cy<3>
    SLICE_X16Y42.DQ       Tito_logic        0.798     cpu/abl/BUS_0005_GND_5_o_add_9_OUT<7>
                                                      cpu/abl/Madd_BUS_0005_GND_5_o_add_9_OUT_Madd_cy<7>
                                                      cpu/abl/BUS_0005_GND_5_o_add_9_OUT<7>_rt
    SLICE_X14Y43.C5       net (fanout=1)    0.465     cpu/abl/BUS_0005_GND_5_o_add_9_OUT<7>
    SLICE_X14Y43.CMUX     Tilo              0.403     cpu_AB<7>
                                                      cpu/abl/Mmux_ADL83_G
                                                      cpu/abl/Mmux_ADL83
    RAMB16_X0Y8.ADDRB7    net (fanout=24)   2.694     cpu_AB_next<7>
    RAMB16_X0Y8.CLKB      Trcck_ADDRB       0.400     Mram_ram1
                                                      Mram_ram1
    ------------------------------------------------- ---------------------------
    Total                                   12.283ns (4.885ns logic, 7.398ns route)
                                                     (39.8% logic, 60.2% route)
```
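As a sanity check on the report, the slack and clock-uncertainty figures follow directly from the two formulas it prints. A minimal Python sketch (the function names are hypothetical):

```python
import math

def clock_uncertainty(tsj, tij, dj, pe):
    """((TSJ^2 + TIJ^2)^1/2 + DJ) / 2 + PE, all in ns."""
    return (math.sqrt(tsj ** 2 + tij ** 2) + dj) / 2 + pe

def setup_slack(requirement, data_path, skew, uncertainty):
    """requirement - (data path - clock path skew + uncertainty), in ns."""
    return requirement - (data_path - skew + uncertainty)

if __name__ == "__main__":
    period = 1000 / 80                                 # 80 MHz -> 12.5 ns
    u = clock_uncertainty(0.070, 0.000, 0.450, 0.000)
    slack = setup_slack(period, 12.283, 0.000, u)
    print(f"uncertainty {u:.3f} ns, slack {slack:.3f} ns")
```

So the generic core's worst path misses 80MHz by 43ps, and 2.1ns of its 12.283ns is the block RAM clock-to-out (Trcko_DOB) before any CPU logic is reached.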

For reference, this is your old core, which I had previously been using, that does just make timing:

```
Paths for end point Mram_ram8 (RAMB16_X1Y4.ADDRB10), 66 paths
--------------------------------------------------------------------------------
Slack (setup path):     0.574ns (requirement - (data path - clock path skew + uncertainty))
  Source:               cpu/state_FSM_FFd3 (FF)
  Destination:          Mram_ram8 (RAM)
  Requirement:          12.500ns
  Data Path Delay:      11.701ns (Levels of Logic = 3)
  Clock Path Skew:      0.035ns (0.741 - 0.706)
  Source Clock:         cpu_clk_BUFG rising at 0.000ns
  Destination Clock:    cpu_clk_BUFG rising at 12.500ns
  Clock Uncertainty:    0.260ns

  Clock Uncertainty:            0.260ns  ((TSJ^2 + TIJ^2)^1/2 + DJ) / 2 + PE
    Total System Jitter (TSJ):  0.070ns
    Total Input Jitter (TIJ):   0.000ns
    Discrete Jitter (DJ):       0.450ns
    Phase Error (PE):           0.000ns

  Maximum Data Path at Slow Process Corner: cpu/state_FSM_FFd3 to Mram_ram8
    Location              Delay type        Delay(ns) Physical Resource
                                                      Logical Resource(s)
    ------------------------------------------------- -------------------
    SLICE_X15Y40.CQ       Tcko              0.430     cpu/state_FSM_FFd29
                                                      cpu/state_FSM_FFd3
    SLICE_X23Y44.A1       net (fanout=4)    2.053     cpu/state_FSM_FFd3
    SLICE_X23Y44.A        Tilo              0.259     N235
                                                      cpu/state__n16181
    SLICE_X15Y44.B3       net (fanout=2)    1.437     cpu/_n1618
    SLICE_X15Y44.B        Tilo              0.259     cpu/Mmux_AB1012
                                                      cpu/Mmux_AB101311
    SLICE_X21Y47.A6       net (fanout=16)   1.308     cpu/Mmux_AB10131
    SLICE_X21Y47.A        Tilo              0.259     cpu_AB<12>
                                                      cpu/Mmux_AB612
    RAMB16_X1Y4.ADDRB10   net (fanout=25)   5.296     cpu_AB_next<10>
    RAMB16_X1Y4.CLKB      Trcck_ADDRB       0.400     Mram_ram8
                                                      Mram_ram8
    ------------------------------------------------- ---------------------------
    Total                                   11.701ns (1.607ns logic, 10.094ns route)
                                                     (13.7% logic, 86.3% route)
```

The spartan6 version is a tad faster and meets timing at 80MHz, but not by much:

```
Paths for end point Mram_ram7 (RAMB16_X1Y4.ADDRB12), 23337 paths
--------------------------------------------------------------------------------
Slack (setup path):     0.072ns (requirement - (data path - clock path skew + uncertainty))
  Source:               Mram_rom16 (RAM)
  Destination:          Mram_ram7 (RAM)
  Requirement:          12.500ns
  Data Path Delay:      12.149ns (Levels of Logic = 6)
  Clock Path Skew:      -0.019ns (0.185 - 0.204)
  Source Clock:         cpu_clk_BUFG rising at 0.000ns
  Destination Clock:    cpu_clk_BUFG rising at 12.500ns
  Clock Uncertainty:    0.260ns

  Clock Uncertainty:            0.260ns  ((TSJ^2 + TIJ^2)^1/2 + DJ) / 2 + PE
    Total System Jitter (TSJ):  0.070ns
    Total Input Jitter (TIJ):   0.000ns
    Discrete Jitter (DJ):       0.450ns
    Phase Error (PE):           0.000ns

  Maximum Data Path at Slow Process Corner: Mram_rom16 to Mram_ram7
    Location              Delay type        Delay(ns) Physical Resource
                                                      Logical Resource(s)
    ------------------------------------------------- -------------------
    RAMB16_X1Y6.DOA0      Trcko_DOA         2.100     Mram_rom16
                                                      Mram_rom16
    SLICE_X15Y45.D5       net (fanout=1)    3.001     N341
    SLICE_X15Y45.D        Tilo              0.259     cpu/abh/ABH<3>
                                                      inst_LPM_MUX711
    SLICE_X15Y45.A3       net (fanout=1)    0.359     rom_dout<7>
    SLICE_X15Y45.A        Tilo              0.259     cpu/abh/ABH<3>
                                                      Mmux_cpu_DI81
    SLICE_X13Y44.A6       net (fanout=7)    0.405     cpu_DI<7>
    SLICE_X13Y44.A        Tilo              0.259     cpu/abl/base<4>
                                                      cpu/abl/adl_base_mux/mux8_3[7].mux8_3
    SLICE_X12Y43.D5       net (fanout=1)    0.423     cpu/abl/base<7>
    SLICE_X12Y43.DMUX     Topdd             0.555     cpu_AB_next<7>
                                                      cpu/abl/abl_add/add[7].add/LUT5
                                                      cpu/abl/abl_add/carry_h
    SLICE_X14Y43.A4       net (fanout=8)    0.543     cpu/abl_co
    SLICE_X14Y43.COUT     Topcya            0.495     cpu_AB<10>
                                                      cpu/abh/adh_add/add[0].add/LUT5
                                                      cpu/abh/adh_add/carry_l
    SLICE_X14Y44.CIN      net (fanout=1)    0.003     cpu/abh/adh_add/COL<3>
    SLICE_X14Y44.AMUX     Tcina             0.210     cpu_AB<15>
                                                      cpu/abh/adh_add/carry_h
    RAMB16_X1Y4.ADDRB12   net (fanout=25)   2.878     cpu_AB_next<12>
    RAMB16_X1Y4.CLKB      Trcck_ADDRB       0.400     Mram_ram7
                                                      Mram_ram7
    ------------------------------------------------- ---------------------------
    Total                                   12.149ns (4.537ns logic, 7.612ns route)
                                                     (37.3% logic, 62.7% route)
```
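Putting the three reports side by side with the same slack formula also gives the clock period each quoted path would need. This is only indicative: it assumes the quoted path is the worst one in each design and reuses the 0.260ns uncertainty from the reports. The names are hypothetical.

```python
# Data path delay and clock path skew (ns) of the paths quoted above.
REPORTS = {
    "generic":  (12.283, 0.000),
    "old core": (11.701, 0.035),
    "spartan6": (12.149, -0.019),
}
UNCERTAINTY = 0.260   # ns, identical in all three reports
REQUIREMENT = 12.500  # ns, i.e. 80 MHz

def summarize(reports=REPORTS):
    """Map each design to (setup slack in ns, path-limited fmax in MHz)."""
    out = {}
    for name, (data_path, skew) in reports.items():
        needed = data_path - skew + UNCERTAINTY  # period this path needs
        out[name] = (REQUIREMENT - needed, 1000 / needed)
    return out

if __name__ == "__main__":
    for name, (slack, fmax) in summarize().items():
        print(f"{name:9s} slack {slack:+.3f} ns  ~{fmax:.1f} MHz")
```

The spartan6 build clears 80MHz by only 72ps, consistent with "meets timing, but not by much".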

If you update the microcode for the spartan6 version with these recent fixes I'll give that a try in the Beeb as well.

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 3:53 pm

by Arlet

Spartan6 fix in GitHub.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 4:00 pm

by Arlet

Timing looks to be dominated by long paths to/from block RAM. Did you run SmartXplorer?

The path you quoted for the old core is not representative, because it's an unusually small bit of logic that happened to get really long routing delays.

An issue that the new core suffers from is that it needs to be tied to a ROM for the microcode, which limits the routing options. Hopefully it will improve with the distributed version.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 4:22 pm

by Arlet

The ASL+RDY+SED problem was caused by an earlier method I came up with for calculating the NZ flags after a read-modify-write. The problem is that in a read-modify-write the ALU result is produced a cycle earlier than in other instructions, because the result still needs to be written back to memory, and then the next opcode needs to be fetched.

My solution was to snoop on the data bus during the write cycle, and grab that data to determine the NZ flags (the C flag already needs to be stored in a flop for BCD carry).

Later, I came up with a better method, where I just let the ALU perform the same operation for 2 cycles in a row, and then use the 1st one to write the data to memory, and use the 2nd cycle for the flags. I tested that method with binary ASL, then did INC/DEC, but didn't do the rest until later. But then with all the porting back and forth between generic/spartan, the changes must have gotten lost.
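A toy model of that second approach, with hypothetical names: the operand is latched once, the ALU result is driven as write data on one step, and the same ALU operation is evaluated again on the next step to produce N/Z. Each step only completes on an RDY-high cycle. This illustrates the idea only; it is not the core's microcode or Verilog.

```python
# Toy 3-step read-modify-write ASL, heavily simplified:
#   step 0: latch the operand read from memory
#   step 1: drive the ALU result onto the bus as write data
#   step 2: evaluate the same ALU operation again and latch N/Z from it
# A step only completes on an RDY-high cycle; RDY-low cycles hold all state.

def alu_asl(a):
    return (a << 1) & 0xFF

def rmw_asl(operand, rdy_pattern):
    step, latched, written, nz = 0, None, None, None
    for rdy in rdy_pattern:
        if step == 3:
            break
        if not rdy:
            continue                    # stall cycle: nothing changes
        if step == 0:
            latched = operand
        elif step == 1:
            written = alu_asl(latched)  # 1st evaluation: write data
        else:
            result = alu_asl(latched)   # 2nd evaluation: flags only
            nz = {"N": result >> 7, "Z": int(result == 0)}
        step += 1
    return written, nz
```

Because the flags come from the ALU rather than from a value snooped off the data bus, extra RDY-low cycles between the steps cannot change them.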

And apparently, snooping the write cycle doesn't work properly if you use RDY.

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 4:36 pm

by hoglet

The Spartan6 microcode changes seem to work.

> Timing looks to be dominated by long paths to/from block RAM. Did you run SmartXplorer?

No I haven't - it's not a tool I'm particularly familiar with.

Am I likely to see much of a difference?

> The path you quoted for the old core is not representative, because it's an unusually small bit of logic that happened to get really long routing delays.

Understood.

When you use large block RAMs, the system speed does seem to be dominated by routing delays associated with high fanout nets used to construct the large RAM.

> An issue that the new core suffers from is that it needs to be tied to a ROM for the microcode, which limits the routing options. Hopefully it will improve with the distributed version.

Yes, this is quite painful in BeebAccelerator, as I would like to use the full 64K. The Xilinx tools don't work well with inferred RAMs that are not a power of 2, so if you try to infer a 62K RAM (leaving 2K for the microcode ROM), it ends up over-allocating and then fails. So to try out your core I've had to construct the memory myself (currently using 16K and 32K chunks and an additional mux). This may also be impacting performance.
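The block RAM arithmetic behind this can be sketched as below. Assumptions: the XC6SLX9 has 32 RAMB16 blocks, each holding 2K bytes when configured 8 bits wide; "over-allocating" is read as the tools rounding a non-power-of-2 inferred RAM up to the next power of 2; and `split_address` is a hypothetical 16K + 32K layout that happens to cover the internal ranges listed earlier.

```python
BRAM_BYTES = 2 * 1024  # assumption: one RAMB16 holds 2K bytes when 8 bits wide
DEVICE_BRAMS = 32      # assumption: RAMB16 count of the XC6SLX9

def brams_for(size_bytes, round_up_to_pow2=False):
    """Block RAMs needed for an 8-bit-wide memory of size_bytes."""
    if round_up_to_pow2:
        size_bytes = 1 << (size_bytes - 1).bit_length()
    return -(-size_bytes // BRAM_BYTES)  # ceiling division

def split_address(addr):
    """Hypothetical 16K + 32K layout with a mux: &8000-&FFFF -> 32K block,
    &0000-&3FFF -> 16K block, anything else is not internal."""
    if addr & 0x8000:
        return "ram32k", addr & 0x7FFF
    if addr < 0x4000:
        return "ram16k", addr
    return None

if __name__ == "__main__":
    microcode = brams_for(2 * 1024)
    for pow2 in (False, True):
        total = brams_for(62 * 1024, pow2) + microcode
        print(f"62K RAM, round_up={pow2}: {total} of {DEVICE_BRAMS} RAMB16s")
```

Under these assumptions a rounded-up 62K RAM plus the microcode ROM needs 33 blocks on a 32-block part, while at its exact size it would just fit.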

So I'm very much looking forward to the option of the microcode being implemented as distributed RAM.

Anyway, keep up the good work, it's been fun debugging these (minor) issues.

Dave

Re: My new verilog 65C02 core.

Posted: Sat Nov 14, 2020 4:40 pm

by hoglet

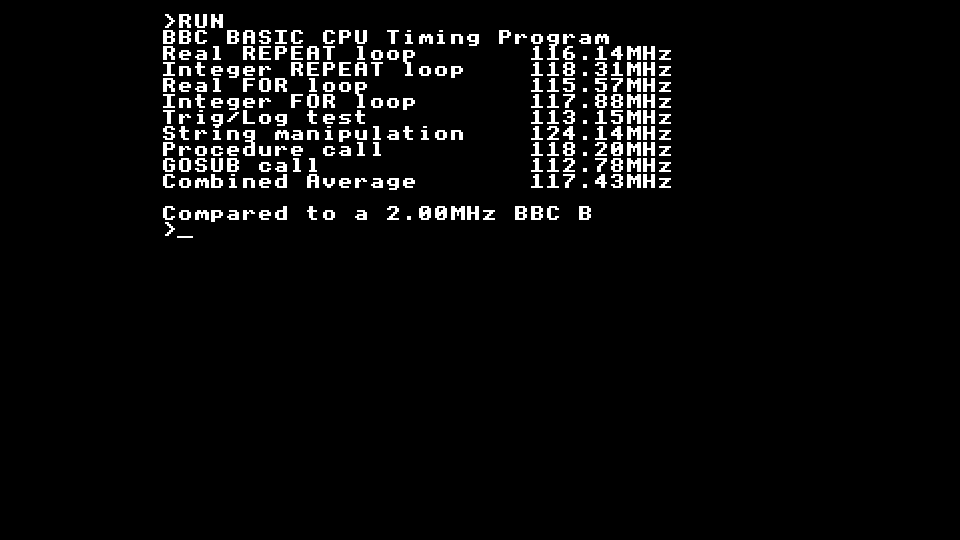

Oh, and one last thing.

Even though it doesn't come close to meeting timing at 100MHz, it does in fact run, and appears very stable:

- capture15.png (1.84 KiB)

The 15% CPI increase is nice to have.

And these are the slower -2 speed grade parts...

What speed parts have you been using?

Dave