i.e., the timing may be spec'd worst case, but how can they know how much capacitance you're driving?

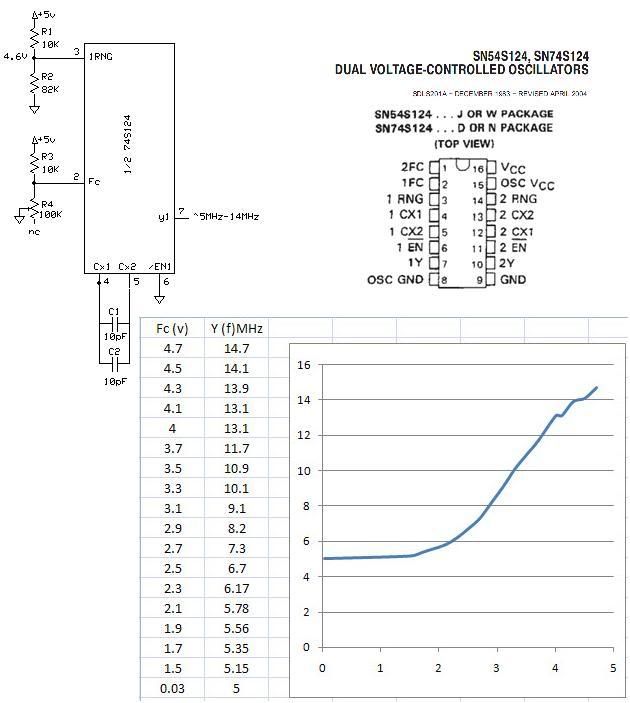

The C64, IIRC, had a lot of LS logic whose inputs require current, and that current will discharge the capacitances. But if you use all CMOS, there's essentially no current (it's guaranteed not to be over 2µA per pin, and that's at a temperature extreme); then no matter how much or how little capacitance you have (it will always be some positive, non-zero amount), an undriven line will hold its logic state. With the 2µA maximum leakage and 5pF normal capacitance per pin, even before including the capacitance of the socket and traces, it will always hold the logic state for at least a microsecond worst case and probably far more. Again, that's if everything is CMOS, which I don't think the C64 was. The microsecond assumes the line was only pulled down to 0.4V and that anything above 0.8V is "no man's land," so it really is worst case: 5pF times (0.8-0.4)V divided by 2µA equals 1µs, or 1000ns.
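For anyone who wants to plug in their own numbers, the arithmetic above is just t = C × ΔV / I. Here is a minimal sketch using the figures quoted (5pF, 0.4V to 0.8V, 2µA). The function name and the 50pF "whole bus" figure are my own assumptions for illustration, not values from any datasheet.

```python
def hold_time_s(capacitance_f, delta_v, leakage_a):
    """Time for a constant leakage current to move a floating node by delta_v.

    Uses t = C * dV / I, which treats the leakage as constant (worst case).
    """
    if leakage_a <= 0:
        raise ValueError("leakage current must be positive")
    return capacitance_f * delta_v / leakage_a


# Figures from the post: 5 pF per pin, 0.4 V rising to 0.8 V, 2 uA max leakage.
t_pin = hold_time_s(5e-12, 0.8 - 0.4, 2e-6)
print(f"single pin: {t_pin * 1e9:.0f} ns")

# Hypothetical bus: 50 pF total (pins + socket + traces) is an assumed value.
t_bus = hold_time_s(50e-12, 0.8 - 0.4, 2e-6)
print(f"assumed 50 pF bus: {t_bus * 1e6:.0f} us")
```

More capacitance on the line only lengthens the hold time, as long as the leakage stays at or below the same limit; several leaking pins sharing the line would add their currents together and shorten it.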

My point is that the specs are just data points and any design is just an extrapolation from those data points.

The way things are generally spec'd, you don't really have enough information to characterize a particular design precisely.

Personally, I think of MOS Technology as being (having been) notorious for their lousy specs.

In the case of WDC, they don't specify capacitance (at least that I see), although maybe that's implicit from some more general specs pertaining to CMOS.

If I were going to relate it to this thread, I'd say it makes a lot more sense to extrapolate a few ns of data hold from bus capacitance than to hope that there are no spurious reads (which seem implicit in BDD's ideas) or that spurious reads would cause no problems.